Semantic Big Data Analytics

Spinning up a Hadoop cluster by lunch and a big "whoa" by dinner. Just another day @ AI.

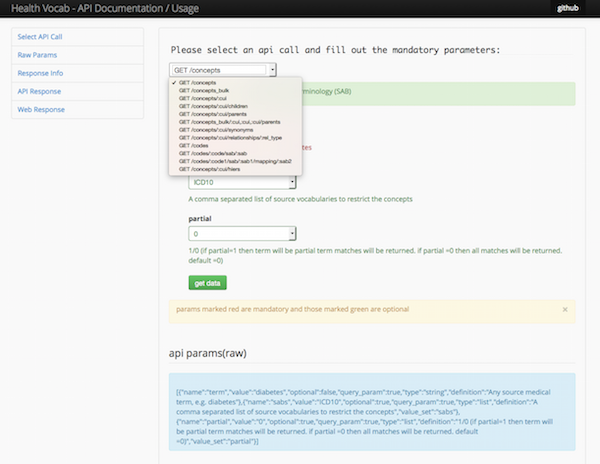

Neither “Semantics” nor “Big data” is just a buzzword for us at AI; both are fundamental approaches to handling large datasets that contain lexically heterogeneous but semantically equivalent entities. Our Health Vocabulary API (HaVoc) provides RESTful access to biomedical terminologies for specialized semantic operations, such as a) linking two records, one coded as “hypertension” and another as “high blood pressure”, or b) grouping records by semantic concept hierarchy (for example, retrieving all records for conditions under the group cardiovascular diseases).
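To make the two operations concrete, here is a minimal sketch using a toy in-memory vocabulary. It is not the HaVoc API: the concept IDs, group names and function names below are all made up for illustration, and a real terminology service would resolve terms against full biomedical vocabularies.

```python
# Toy vocabulary: every ID and term mapping here is illustrative, not a real code system.
SYNONYMS = {
    "hypertension": "C001",
    "high blood pressure": "C001",
    "myocardial infarction": "C002",
    "heart attack": "C002",
    "asthma": "C003",
}

# Hypothetical concept hierarchy: child concept -> parent group.
PARENT = {
    "C001": "G_CARDIO",
    "C002": "G_CARDIO",
    "C003": "G_RESP",
}


def concept_of(term):
    """Map a free-text term to its concept ID, or None if unknown."""
    return SYNONYMS.get(term.strip().lower())


def same_concept(a, b):
    """True when two differently worded terms resolve to the same concept."""
    ca, cb = concept_of(a), concept_of(b)
    return ca is not None and ca == cb


def ancestors(concept):
    """Walk up the hierarchy and collect every ancestor group."""
    found = []
    while concept in PARENT:
        concept = PARENT[concept]
        found.append(concept)
    return found


def records_under(records, group):
    """Keep records whose condition falls under the given group."""
    kept = []
    for record in records:
        concept = concept_of(record["condition"])
        if concept is not None and (concept == group or group in ancestors(concept)):
            kept.append(record)
    return kept


records = [
    {"id": 1, "condition": "Hypertension"},
    {"id": 2, "condition": "high blood pressure"},
    {"id": 3, "condition": "Asthma"},
    {"id": 4, "condition": "Heart attack"},
]

linked = same_concept("hypertension", "high blood pressure")
cardio_ids = [r["id"] for r in records_under(records, "G_CARDIO")]
```

In this toy setup, `linked` is `True` and `cardio_ids` contains records 1, 2 and 4, since both hypertension wordings and the heart attack record sit under the same hypothetical cardiovascular group.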

Beyond the semantics, we have developed a proprietary in-house data processing framework called Garbanzo that supports the entire pipeline: ETL, integration, analytics and query. Our record linkage and de-duplication algorithms run on large Apache Spark clusters and produce blazingly fast results with high specificity and sensitivity.
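For readers new to record linkage, the sketch below shows the general idea on a single machine: normalize two names, score their similarity, and then measure sensitivity and specificity against hand-labeled pairs. It is an illustration only. It does not use Spark, it is not the Garbanzo algorithm, and the title list, threshold and sample names are assumptions chosen for the example.

```python
import re
from difflib import SequenceMatcher

# Assumed list of titles to ignore; a real system would use a far richer set of rules.
TITLES = {"dr", "md", "phd"}


def normalize(name):
    """Lowercase, drop punctuation and titles, and sort tokens so word order is ignored."""
    cleaned = re.sub(r"[^a-z\s]", " ", name.lower())
    tokens = [t for t in cleaned.split() if t not in TITLES]
    return " ".join(sorted(tokens))


def is_match(a, b, threshold=0.85):
    """Declare a link when the normalized strings are similar enough."""
    score = SequenceMatcher(None, normalize(a), normalize(b)).ratio()
    return score >= threshold


def sensitivity_specificity(labeled_pairs):
    """Compare predictions with ground truth given as (name_a, name_b, is_same_person)."""
    tp = fp = tn = fn = 0
    for a, b, truth in labeled_pairs:
        predicted = is_match(a, b)
        if predicted and truth:
            tp += 1
        elif predicted and not truth:
            fp += 1
        elif not predicted and truth:
            fn += 1
        else:
            tn += 1
    sensitivity = tp / (tp + fn) if (tp + fn) else 0.0
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    return sensitivity, specificity


# Made-up labeled pairs for the demonstration.
labeled = [
    ("Dr. Jane Smith", "Smith, Jane MD", True),
    ("Jane Smith", "Jane Smyth", True),
    ("Jane Smith", "Robert Chen", False),
]

sens, spec = sensitivity_specificity(labeled)
```

Sensitivity is the share of true matches the matcher finds, and specificity is the share of true non-matches it correctly leaves unlinked. Raising the threshold generally trades the first for the second.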

As with all our endeavors, we have much to look forward to as we grow our capabilities to integrate clinical, research and personal self-quantification datasets and produce meaningful insights.

ResearchTracker combines public data from ClinicalTrials.gov, NIH grants (RePORTER database), PubMed and Sunshine Act Open Payments data. It integrates the research investigators and sites using record linkage algorithms and provides a dashboard of the latest research activity at a given research university. It also enables aggregation of various statistics for any medical disease or treatment keyword in near real time.

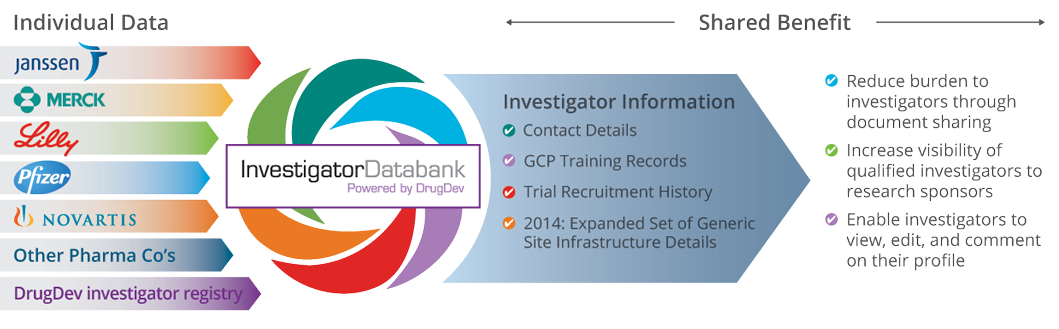

As a client solution, we developed the Investigator Databank to enable pharma companies including Pfizer, Merck, Lilly, Janssen and Novartis to share site and investigator metrics with each other. The Databank uses our data matching, record linking and semantic search technology to integrate and query millions of investigator and site records across these member companies, in a manner that respects their privacy clauses. We also extended the solution to develop the TransCelerate Investigator Registry, used by 21 pharma companies across the world.

The Garbanzo framework is a data processing toolkit that underpins all our big data work. It was developed over years of fine-tuning and abstracting the repetitive tasks in data science. We have amassed a library of functions to clean data, organize data, integrate with external databases and enhance data.
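As a rough illustration of what a library of small, reusable cleaning functions can look like, here is a minimal sketch that chains single-purpose steps into one pipeline. Garbanzo itself is proprietary, so none of this is its actual API: the function names and the sample record are hypothetical.

```python
from functools import reduce


def strip_whitespace(record):
    """Trim leading and trailing spaces from every string value."""
    return {k: v.strip() if isinstance(v, str) else v for k, v in record.items()}


def drop_empty(record):
    """Remove fields that carry no information."""
    return {k: v for k, v in record.items() if v not in ("", None)}


def lowercase_keys(record):
    """Use consistent lowercase field names."""
    return {k.lower(): v for k, v in record.items()}


def pipeline(*steps):
    """Compose single-record steps into one function, applied left to right."""
    def run(record):
        return reduce(lambda acc, step: step(acc), steps, record)
    return run


clean = pipeline(strip_whitespace, drop_empty, lowercase_keys)
cleaned = clean({"Name": "  Jane Smith ", "Site": "", "Trials": 3})
```

Here `cleaned` ends up as `{"name": "Jane Smith", "trials": 3}`. Keeping each step tiny and free of side effects is one way to make repetitive cleanup tasks easy to reuse and reorder across projects.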